In recent years, especially since the popularization of ChatGPT, we have heard a lot about artificial intelligence (AI), which has become a major investment theme, even in wealth management. We believe it is a revolution, although for now we find it excessive to call it “radical.” It is certainly not a passing trend, however. In the financial sector, we have noticed that the term AI has completely replaced the term machine learning (ML), which used to be mentioned frequently as a tool for developing investment strategies.

So, it seems fair to ask: what happened to ML, and what is its relationship with AI? We will answer this question with a brief historical excursus, hoping it will be of interest and help clarify the concepts of AI and ML.

In this excursus, we explain some concepts in a general, non-technical manner, aiming for intuition rather than a rigorous scientific treatment. ML means statistical learning by a computing machine (essentially a computer). Various computational models exist for this purpose, one of which is the artificial neural network (ANN). The term AI, coined in the mid-1950s, is closely linked to attempts to formalize, through a mathematical/statistical model, the human learning process (or that of mammals in general); ANNs do this by reproducing, in simplified form, the structure and function of the brain. The human brain consists of tens of billions of neurons (roughly 86 billion by common estimates) connected by trillions of synapses. Neurons communicate by emitting electrical impulses; at the synapses, these impulses are converted into chemical signals (neurotransmitters) that reach the recipient neurons. Depending on the “strength” of the message, the receiving neuron can activate and retransmit it, creating a path through a dense network of neurons. Synapses play a central role in learning because similar experiences activate, and thereby reinforce, the same neural pathways.

One of the earliest models attempting to capture this morphology and functioning was Rosenblatt's Perceptron (introduced in 1958 and described at length in his 1962 book), which had only one layer of trainable neurons. Additional layers, together with techniques for training the resulting networks, were added later. Each node (a stylized “biological neuron”) in the ANN performs a calculation and transmits the result to the next set of nodes, weighted by a parameter (representing the “synapse”). Training (or learning) consists of presenting input data (such as images, texts, or time series) and calibrating the model parameters so that the outputs are close to the actual results, that is, minimizing the error. After training, the ANN can generalize and produce correct outputs with high probability when faced with new inputs. The most commonly used method for estimating the parameters is backpropagation. In networks with many layers, however, it revealed a flaw: the error signal shrinks as it is propagated backward, so the parameters of the last layers are adjusted far more than those of the earlier layers, which barely learn (the so-called vanishing gradient problem). Networks with only one or a few layers, on the other hand, could solve only problems of limited complexity. This is why ANNs lost appeal over time, giving way to other ML models such as kernel methods.
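To make the idea of a node and its training more concrete, here is a minimal sketch in Python of a single artificial neuron that learns the logical AND function with the classic perceptron update rule. It is only an illustration under stated assumptions: the function names (`predict`, `train`), the learning rate, the number of passes over the data, and the choice of AND as the task are ours and do not come from any specific library or from the models discussed in this article.

```python
# Minimal sketch of one artificial neuron (a perceptron) learning logical AND.
# All names and settings here are illustrative assumptions.

def predict(weights, bias, inputs):
    """Weighted sum of the inputs followed by a step activation (0 or 1)."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0

def train(samples, learning_rate=0.5, epochs=20):
    """Nudge the weights after each example in proportion to the error."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

# Training data: each pair of inputs with the desired output (logical AND).
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train(AND_SAMPLES)
outputs = [predict(weights, bias, inputs) for inputs, _ in AND_SAMPLES]
print(outputs)  # [0, 0, 0, 1]
```

The weights play the role of the “synapses”: they start at zero and are adjusted only when the neuron's answer is wrong. Real networks stack many such nodes in layers and use smoother activation functions so that backpropagation can be applied.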

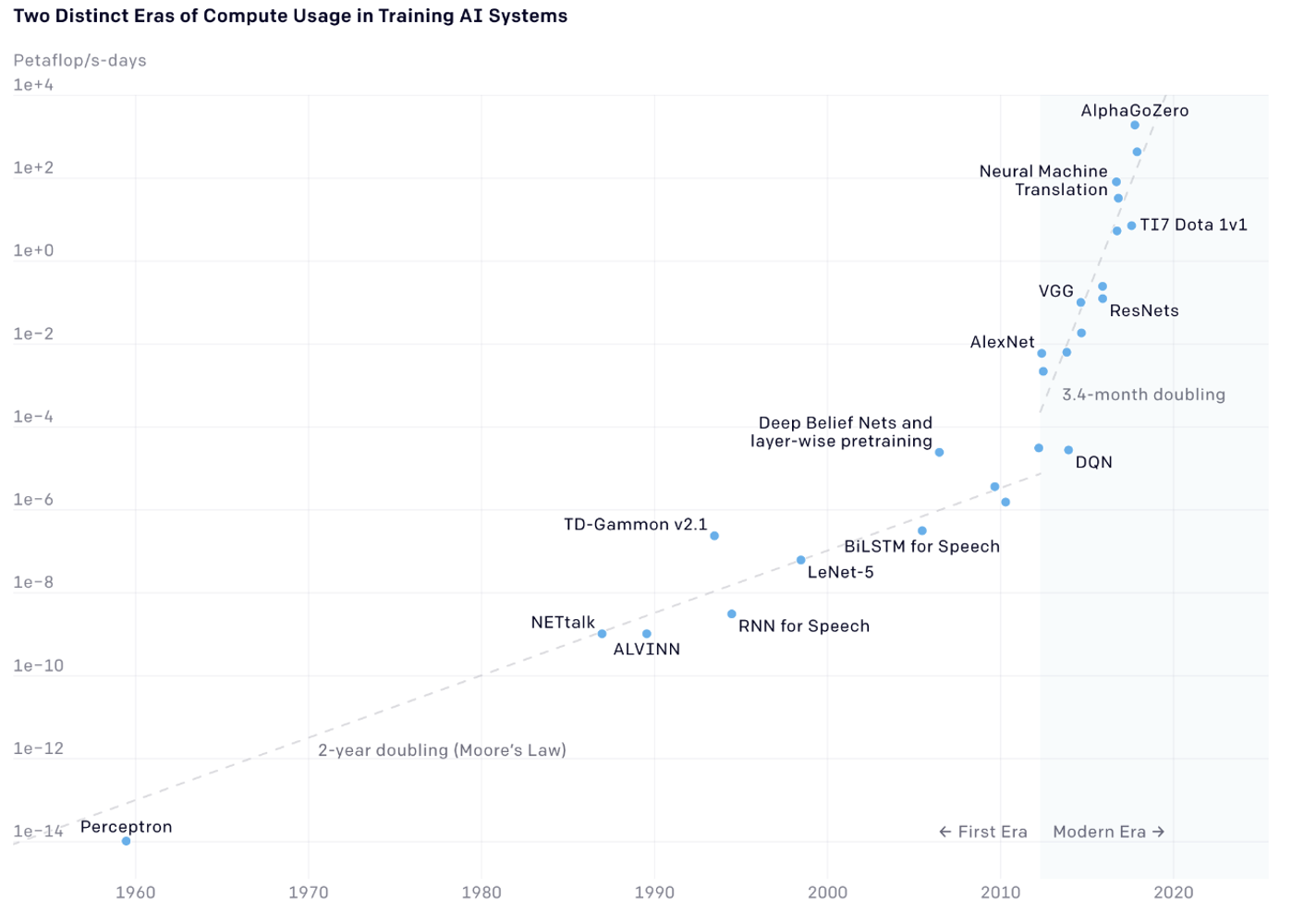

However, in the second decade of this century, new ANN models emerged and, more importantly, the use of graphics processing units (GPUs) for parallel computing and the availability of ever-larger datasets rekindled interest in neural networks. This marked the rise of deep learning (DL), which made it possible to solve highly complex problems with astonishing applications, such as generating meaningful texts, images, and sounds: what is known as generative artificial intelligence. The increased computing power made it possible to estimate the billions of parameters required by multiple layers of artificial neurons. This also explains why NVIDIA, with its latest-generation processors such as Hopper and later Blackwell, has experienced the growth we see today. To illustrate the increase in computing capacity, we reference a graph from OpenAI (downloadable from their website).

The graph uses a logarithmic scale because of the extremely pronounced exponential growth since 1960. It depicts the growth in the amount of computation used to train state-of-the-art ML models. The striking aspect is that until the early 2010s this amount doubled roughly every 18 to 24 months, in line with Moore's Law (which strictly concerns the number of transistors on a chip rather than clock speed), whereas afterwards the doubling period shortened to three to four months.
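To get a feel for what these doubling periods imply, the short Python sketch below converts a doubling period into a growth factor over one year. It is a back-of-the-envelope illustration only: the function name `growth_factor` is ours, and the 3.5-month value is simply an assumed midpoint of the three-to-four-month range mentioned above, not a figure taken from the graph.

```python
# Illustrative sketch: how a doubling period translates into growth over time.
# The function name and the sample periods are assumptions for illustration.

def growth_factor(elapsed_months, doubling_months):
    """How many times a quantity multiplies if it doubles every `doubling_months`."""
    return 2 ** (elapsed_months / doubling_months)

# Doubling every 18 months (the Moore's Law pace quoted above).
yearly_slow = growth_factor(12, 18)

# Doubling every 3.5 months (assumed midpoint of the three-to-four-month range).
yearly_fast = growth_factor(12, 3.5)

print(f"18-month doubling:  x{yearly_slow:.1f} per year")
print(f"3.5-month doubling: x{yearly_fast:.1f} per year")
```

Under these assumptions, the slower pace corresponds to growth of roughly 1.6 times per year, while the faster pace corresponds to roughly 11 times per year, which is why the curve bends so sharply even on a logarithmic scale.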

In conclusion, one can consider AI a branch of ML or use the two terms synonymously. We are aware that this is a non-standard view, as ML is generally considered a subset of AI. The essential point is that we are discussing a new scientific and applied approach with a markedly pervasive character, which affects, and will increasingly affect, every economic sector: an unmissable investment theme for the years ahead.

Disclaimer: This article expresses the personal opinions of the contributors of Custodia Wealth Management who wrote it. It does not constitute investment advice, personalized consulting, or an invitation to perform transactions on financial instruments.